Phantom Menace

The myth of American isolationism

By Peter Beinart

In an op-ed last year in The Washington Post, former Sens. Joe Lieberman and Jon Kyl warned of “the danger of repeating the cycle of American isolationism.” That summer, Post columnist Charles Krauthammer heralded “the return of the most venerable strain of conservative foreign policy: isolationism.”

New York Times columnist Bill Keller then fretted that “America is again in a deep isolationist mood.” This November, Wall Street Journal columnist Bret Stephens will publish a book subtitled The New Isolationism and the Coming Global Disorder.

What makes these warnings odd is that in contemporary foreign policy discourse, isolationism—as the dictionary defines it—does not exist. Calling your opponent an “isolationist” serves the same function in foreign policy that calling her a “socialist” serves in domestic policy. While the term itself is nebulous, it evokes a frightening past, and thus vilifies opposing arguments without actually rebutting them. For hawks eager to discredit any serious critique of America’s military interventions in the “war on terror,” that’s very useful indeed.

TO GRASP HOW little basis today’s attacks on “isolationism” have in reality, it’s worth understanding what the term “isolationism” actually means. Merriam-Webster defines it as “the belief that a country should not be involved with other countries.” The Oxford dictionaries call it “a policy of remaining apart from the affairs or interests of … other countries.”

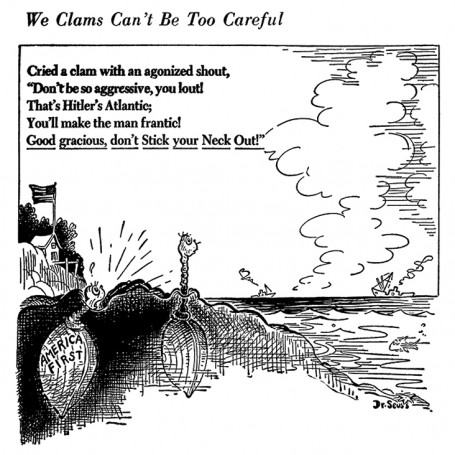

When critics decry isolationism today, they usually map that dictionary definition onto a particular historical period: the 1920s and 1930s. Warnings about isolationism almost always come with the same historical morality tale: America turned inward in the interwar years, and the world went to hell. That’s what makes “isolationism” scary. Like “socialism,” it’s a euphemism for “Hitler and Stalin are coming.”

The problem is that isolationism—as commonly understood—not only doesn’t fit American foreign policy today, it doesn’t even fit American foreign policy in the 1920s and 1930s. There are plenty of valid critiques of how the United States comported itself on the world stage between World War I and World War II. But the claim that America detached itself from other countries is simply not true. In 1921, for instance, President Harding summoned the world’s powers to the Washington Naval Conference and pushed through what some have called the first disarmament treaty in history. In 1924, after Germany’s failure to pay its war reparations led French and Belgian troops to occupy the Ruhr Valley, the Coolidge administration ended the crisis by appointing banker Charles Dawes to design a new reparations-payments system, which Washington muscled the European powers into accepting. American pressure helped to produce the 1925 Treaty of Locarno, which guaranteed the borders between Germany and the countries to its west (though not, fatefully, to its east). In 1930, President Hoover played a key role in the London Naval Conference, which placed further limits on naval construction.

Again and again during the interwar years, the U.S. deployed its newfound economic power to shape politics in Europe. And this overseas engagement wasn’t limited to America’s government alone. Although the United States severely limited European immigration in the 1920s, Americans built the avowedly internationalist institutions that would help guide the country’s foreign policy after World War II. The Council on Foreign Relations was born in 1921. The University of Chicago created America’s first graduate program in international affairs in 1928. And during the interwar years, American travel to Europe expanded dramatically. To be sure, the U.S. in the interwar years was more comfortable intervening economically and diplomatically than militarily. But despite the Neutrality Acts meant to keep the U.S. out of another European war, the Roosevelt administration began sending warplanes and warships to Britain two years before Pearl Harbor. By early 1941, long before America officially entered the war, its ships were already hunting German vessels across the Atlantic.

The only sense in which the United States in the interwar years truly remained apart from other nations lay in its refusal to make binding military commitments, either via the League of Nations or through alliances with particular nations. America wielded power economically, diplomatically, and even militarily, but it jealously guarded its sovereignty. That’s why one influential history of the era dubs U.S. foreign policy between the wars “independent internationalism.” (The last prominent spokesperson for that form of independence was Sen. Robert Taft of Ohio, who during the early Cold War opposed NATO because it required that America pledge itself to Europe’s defense, but who endorsed an all-out war with China to reunify Korea under Western control.) The popular “characterization of America as isolationist in the interwar period,” argues Ohio State University’s Bear Braumoeller in a useful review of the academic literature on the period, “is simply wrong.”

IF CALLING AMERICA isolationist in the 1920s and 1930s is wrong, calling America isolationist today is absurd. The United States currently stations troops in more than 150 countries. Its alliances commit it to defend large swaths of Europe and Asia against foreign attack. Recent presidents have dropped bombs on, or sent troops to, Kuwait, Iraq, Afghanistan, Bosnia, Kosovo, Somalia, Sudan, Syria, Libya, Pakistan, and Yemen. Last month, President Obama sent 3,000 American troops to battle an Ebola outbreak in West Africa. And while Americans fiercely debate particular military interventions and foreign-aid programs, the general presumption that the United States should play a leading role in solving problems far from our shores is largely uncontested in the American political mainstream.

Just how uncontested becomes clear when you examine the foreign policy evolution of Rand Paul, the man frequently held up as the leader of his party’s isolationist wing. As a Senate candidate in 2009, Paul mused about reducing America’s military bases overseas. In 2011, soon after entering the Senate, he suggested eliminating foreign aid. He has also repeatedly insisted that only Congress, and not the president, can declare war (a position that Barack Obama championed when he was in the Senate as well).

Even these views did not make Paul an isolationist. He has never questioned America’s membership in NATO, for instance, or its security alliance with Japan, the cornerstones of America’s post-World War II global role. But in Paul’s early days on the national political stage, his foreign policy instincts did diverge substantially from the ones that held sway in official Washington.

What has happened since shows just how hegemonic America’s globalist consensus actually is. For starters, Paul’s efforts to dial back American interventionism went nowhere. His Senate bill to end foreign aid to Egypt, Pakistan, and Libya got 10 votes. A later bid to reduce America’s overall aid budget from $30 billion to $5 billion garnered 18 votes. This at a time when, according to Bill Keller, America was in “a deep isolationist mood.”

Moreover, Paul’s own views have become markedly more conventional. After first saying that the U.S. should not “tweak” Russia for its aggression in Ukraine, Paul later called for imposing harsh sanctions on Moscow, reinstalling missile-defense systems in Poland and the Czech Republic, and boycotting the Winter Olympics in Sochi. On ISIS, Paul has followed a similar path. After expressing initial skepticism about the value of air strikes, he now says, “If I had been in President Obama’s shoes, I would have acted more decisively and strongly against ISIS.”

Were Paul really an isolationist, his approach to the Middle East would be straightforward: Extricate America from the region and stop giving its people reasons to hate us. But he has explicitly repudiated that view. “I don’t agree that absent Western occupation, that radical Islam goes quietly into that good night,” he said in a speech last year. “Radical Islam is no fleeting fad but a relentless force.” Paul has even attacked Obama for “disengaging diplomatically in Iraq and the region.”

Instead, over the last year, Paul has developed an approach patterned on the internationalist thinking that influenced foreign policy elites during the Cold War. In a speech last February, Paul said the United States should contain jihadist Islam the way George Kennan envisioned containing Soviet Communism. For Kennan, containment represented an alternative to both isolationism and war. It required buttressing partners that could halt the expansion of Soviet power without trying to roll it back, since that would risk war. Whether one can usefully transfer the concept of containment to the current “war on terror” is questionable. But in invoking Kennan, Paul was expressing a preference for steady, cautious, long-term American engagement in the Middle East—hardly what you’d expect from an isolationist.

Besides containment, Paul’s other watchword is “stability.” “What much of the foreign policy elite fails to grasp is that intervention to topple secular dictators has been the prime source of that chaos,” he said last month. “From Hussein to Assad to Qaddafi, we have the same history. Intervention topples the secular dictator. Chaos ensues, and radical jihadists emerge. … Intervention that destabilizes the region is a mistake.”

Against both liberal interventionists and “neoconservatives” who support intervention to produce more democratic, pro-Western regimes, in other words, Paul wants the United States to support the Arab world’s traditional, comparatively secular autocrats, because at least they keep the region under control. His core argument with hawks such as John McCain and Lindsey Graham is not over whether America should withdraw from the Middle East. It’s over whether America should use its influence there to prop up the old order or usher in something new. That’s why Paul now peppers his speeches with quotes from Colin Powell, Robert Gates, and Dick Cheney circa 1991, policymakers who cut their teeth in the more risk-averse but still undoubtedly internationalist Republican Party of Henry Kissinger and George H.W. Bush. As Jason Zengerle recently pointed out in The New Republic, Paul’s foreign policy has become a fairly standard brand of realism, with some anxiety over unchecked presidential power thrown in.

Critics see this as cynical. Paul, as numerous articles have noted, has grown more hawkish as he’s courted the donors he needs to fund his likely presidential campaign. But the fact that Paul is, by necessity, drawing closer to a foreign policy consensus he once challenged is evidence not of that consensus’s weakness, but of its strength.

THAT CONSENSUS WITHIN the political class is not built upon big-dollar donations alone. There are certainly differences between how party elites want the United States to behave around the world and what ordinary citizens desire. But contrary to much media commentary, isolationism is not only largely absent from foreign policy discourse in Washington. It’s also largely absent from foreign policy discourse among the public at large.

Last December, a poll by the Pew Research Center found that, by 52 percent to 38 percent, Americans wanted the U.S. to “mind its own business internationally,” the largest gap in a half-century. The poll sparked a torrent of journalistic anxiety. “American isolationism,” fretted a Washington Post headline, “just hit a 50-year high.”

But upon closer examination, it becomes clear that Americans don’t actually want their country to “mind its own business” overseas at all. The same Pew poll that supposedly revealed Americans to be isolationists also found that, by a margin of more than 40 percentage points, they believe that “greater U.S. involvement in the global economy is a good thing.” Fifty-six percent of respondents told Pew the United States should “cooperate fully with the United Nations.” Seventy-seven percent agreed that, “in deciding on its foreign policies, the U.S. should take into account the views of its major allies.” And a clear majority opposed the idea that “since the U.S. is the most powerful nation in the world, we should go our own way in international matters.” In that same vein, a recent study by the Chicago Council on Global Affairs found that 59 percent of Americans want the U.S. to maintain its overseas military deployments at current levels. It also found that when told how much the U.S. spends on defense and foreign aid, Americans urge cutting the former but want the latter to go up.

How can a public that endorses greater economic globalization, far-flung military bases, extensive coordination with American allies and the United Nations, and higher foreign aid also say it wants the U.S. to “mind its own business” internationally? The answer lies in the way Washington elites have defined America’s international “business.” In recent years, America’s highest-profile overseas behavior has been its military interventions, either directly or via proxies, in Afghanistan, Iraq, Libya, Syria, and, at one point, potentially Ukraine. When Pew conducted its poll in late 2013, it was those interventions that Americans rejected, not international engagement, or even military action, per se.

The Chicago Council poll teased out the distinction. Like Pew, it uncovered an ostensibly high level of isolationism: Forty-one percent of respondents said it would “be best for the future of the country” if “we stay out of world affairs.” But when the council dug deeper, it found, “Even those who say the United States should stay out of world affairs would support sending U.S. troops to combat terrorism and Iran’s nuclear program. However, many of the conflicts in the press today—for example, in Syria and Ukraine—are not seen by the public as vital threats to the United States.” It’s no surprise, therefore, that since September, when the ISIS beheadings convinced many Americans that the chaos in Iraq and Syria might threaten them, the percentage supporting military action in those countries has shot up.

In important ways, in fact, the standard claim that elites must overcome the ingrained isolationism of ordinary Americans gets things backward. When it comes to working through the U.N. or paying heed to America’s allies, the public is more sympathetic to international cooperation than are many Beltway insiders. In official Washington, for instance, it is virtually taken for granted that America must remain the world’s lone superpower. By contrast, ordinary Americans, according to Pew, overwhelmingly want America to play a “shared leadership role” with other countries. Only 12 percent want America to be the “single world leader,” the same percentage who want America to play “no leadership role” at all.

GIVEN THE OVERWHELMING evidence, both from politicians and the public, that isolationism in America today is virtually nonexistent, why do so many high-profile commentators and politicians depict it as a grave threat? One clue lies in a word that these Cassandras use as a virtual synonym for isolationism: “retreat.” If the subtitle of Bret Stephens’s forthcoming book is The New Isolationism and the Coming Global Disorder, its title is America in Retreat. In their op-ed warning of a new “cycle of American isolationism,” Lieberman and Kyl employ variations of “retreat” or “retrench” six times.

But “isolationism” and “retreat” are entirely different things. Isolationism has a fixed meaning: avoiding contact with other nations. Retreat, by contrast, only gains meaning relatively. The mere fact that a country is retreating tells you nothing about the extent of its interactions overseas. You need to know the position it is retreating from.

Herein lies the rub. In general, the isolationism-slayers are far more comfortable bemoaning American retreat than defending the military frontiers from which America is retreating. That’s because those frontiers, which reached their apex under George W. Bush, were both historically unprecedented and historically calamitous.

To realize how historically unprecedented they were, it’s worth remembering how much more circumscribed America’s military ambitions were under Ronald Reagan. He could not have imagined sending ground troops to invade Afghanistan or Iraq. For one thing, both countries were clients of the Soviet Union. For another, the bitter legacy of Vietnam made sending hundreds of thousands of troops to overthrow a government half a world away inconceivable. During his eight years in office, Reagan invaded only one foreign country: Grenada, whose army boasted 600 troops. In his final year in the White House, when some administration hawks suggested he invade Panama, Reagan adamantly refused. The idea struck him as far too risky.

Equally inconceivable was the idea of deploying American troops on former Soviet soil. One of the disputes that initially led hawks to label Rand Paul an isolationist was the Kentuckian’s 2011 opposition to admitting the former Soviet republic of Georgia into NATO, an issue that put him in conflict with fellow GOP rising star Marco Rubio. But if Paul is an isolationist because he opposes an American military guarantee to defend Georgia, what does that make James Baker, who in 1990 reportedly promised Mikhail Gorbachev that if Moscow allowed Germany to reunify, NATO would not expand “one inch” further east: not even into East Germany, let alone the rest of Eastern Europe, let alone the former Soviet Union itself?

Between Reagan’s presidency and Obama’s, America’s military frontier advanced to fill the gap left by the collapse of Soviet power. Aspects of that expansion turned out well. George H.W. Bush reestablished Kuwait’s sovereignty in the first Persian Gulf War; Bill Clinton helped stabilize southeastern Europe by waging war to stop Slobodan Milosevic’s rampage through Bosnia and later Kosovo; countries such as Poland, Hungary, and the Czech Republic have prospered under NATO protection.

But in Afghanistan and Iraq, America’s forward march turned catastrophic. More than twice as many Americans have died in those two wars as in the September 11 attacks that justified them. A 2013 study by Linda J. Bilmes of Harvard’s Kennedy School of Government estimates that they will ultimately cost the United States between $4 trillion and $6 trillion. As a result, she argues, their financial legacy “will dominate future federal budgets for decades to come.”

Obama has made mistakes in his retreat from those wars. (I’ve been particularly critical of him for disengaging diplomatically from Iraq while Nuri al-Maliki was pushing his country’s Sunnis into the arms of ISIS.) But the notion that Obama should not have retreated—that he should have defended a historically unprecedented military frontier in wars that were causing America debilitating long-term fiscal damage and snuffing out thousands of young American lives, against insurgencies that posed no direct or imminent threat to the United States—is hard to forthrightly defend. Which is why hawks rarely defend it. Instead, they equate retreat with isolationism and isolationism with a fictionalized account of the 1920s and 1930s. And, presto, Obama becomes a latter-day Neville Chamberlain while they become heirs to Winston Churchill rather than to a guy named Bush.

Hawks worried that Barack Obama, or Rand Paul, or the American people have not defended American interests forcefully enough in Iraq, Syria, Ukraine, or Iran can make plenty of legitimate arguments. Calling their opponents “isolationists” isn’t one of them. It’s time journalists greet that slur with the same derision they currently reserve for epithets like “socialist,” “fascist,” and “totalitarian.” Then, perhaps, we can have the foreign policy debate America deserves.

Peter Beinart is an associate professor of journalism and political science at the City University of New York.